When relational databases, data warehouses, and data marts took root in the late 1990s, our data and our database systems were just down the hall, in a rack sitting in a server room. Our data resided on our own property, in server rooms we controlled. While we had physical control over our data, we were tightly bound to the database vendor's proprietary software and hardware systems, storage formats and SQL dialect. Everything from SQL dialects to storage formats was much less standardized at the time than it is today.

Proprietary Storage Engines

We were entrusting our data storage and query engine interfaces to a software vendor (at the time Oracle, Sybase, IBM Informix, SQL Server, etc.). Our data was in a proprietary storage format controlled by the database software vendor, and we were at their mercy for future support and licensing if we wanted continued access to our data.

Colocation and Hosting

As the internet grew, along came specialized hosting data centers. We moved our OLTP databases, OLAP data warehouses and app servers to secured cages sitting in a remote, climate-controlled and network-optimized shared complex. We owned the hardware, but the data was still held in proprietary storage formats from the big database vendors like Oracle, SQL Server, Sybase, etc. With this transition our data moved a bit further from our control, since we were giving up some physical control and access to the infrastructure in exchange for the benefits of colocation.

Moving to the Public Cloud

Then came the public cloud and infrastructure as a service. We moved our database systems onto virtual hardware and managed storage and networking controlled by a cloud provider. We no longer owned or controlled the physical infrastructure or managed a physical space in a data center. This brought many benefits: easier provisioning of infrastructure, remote and automated management, and the virtually unlimited incremental scalability of cloud compute and storage. However, our data is still locked up in proprietary storage engines. Either we are using our own Oracle or Teradata software licenses on virtual machines in the cloud, or we are using more cloud-native data warehouse services such as Redshift or BigQuery.

Why does all this history matter? The less control we have over our data systems, the more restrictions we will face on future opportunities for using the raw data, metadata and related processing logic (e.g. SQL, DML, UDFs, etc.), not to mention on managing costs and licensing. When your data is stored in a vendor's storage engine (Oracle, Teradata, etc.), it is stored in their proprietary format and you are typically limited to using only their query engine and tooling to access it.

When your servers are at a remote site outside your property, you rely on the data center for security and management. When you then host your servers in the public cloud, there are additional layers of software involved, from compute and storage virtualization to shared infrastructure services. These all add to the loss of control over your data and reduce your ability to access and use it without paying a service fee of some kind. This loss of control can mean a lack of options (end-of-life scenarios, for example), which affects portability, scalability and overall cost management.

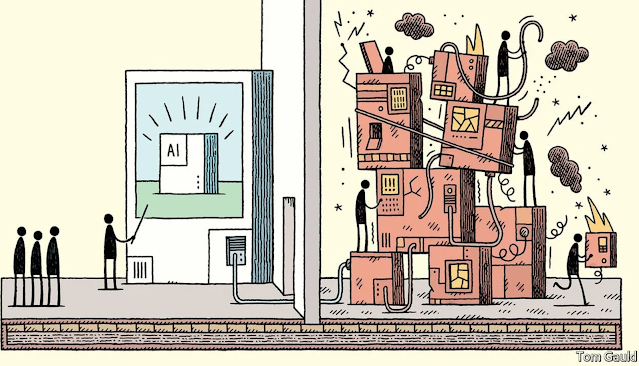

The Rise of Cloud Data Warehouse Vendors

Your dependence on a cloud provider and on database software vendors (sometimes one and the same) for how you use your data needs to be front of mind in your cloud data warehouse architecture. While it is not practical to have 100% portability across cloud infrastructure providers or software vendors, you need to consider how best to leverage open source and open storage standards, and to keep the door open to hybrid cloud options (or cloud provider portability) whenever possible when it comes to your data platform. I am a firm believer in making these decisions consciously and upfront. Otherwise you will just repeat the last two decades of Teradata, Netezza and Oracle type lock-in that many enterprises are trying to unwind today.

The lock-in scenarios with proprietary data warehousing storage engines have not changed much with the public cloud providers. Data warehouse engines such as Redshift and BigQuery still store the data in proprietary formats. They offer much greater data integration flexibility with other cloud services than legacy data warehouse vendors do, but you are still at the mercy of their proprietary storage.

Does Your Cloud DW Reside in Your Cloud Account?

Newer players have entered the cloud data warehousing space, with Snowflake the leader and others coming online to provide Data-as-a-Service solutions. With SaaS data warehousing providers, your data resides in S3 but is controlled by a SaaS provider such as Snowflake in a different AWS account (or a different Azure account). This does not bring you any more control over your data. With data-as-a-service vendors you are still at their mercy and subject to their lock-in and, even worse, your data does not reside in your cloud account. It is one thing to have your data in the public cloud; it is another to have your data in someone else's AWS account. This can be fine for some enterprises, but with solutions such as Snowflake you need to clearly understand what kind of control (for better or worse) you are delegating to your database vendor.

So what is the solution to this lock-in?

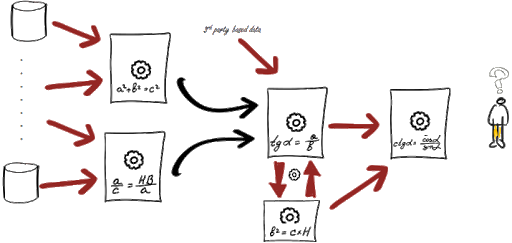

Big Data with open source Hadoop attempted to address the proprietary lock-in problem. In the late 2000s, Hadoop took off and started to put at least some of your data into open standard data formats and onto commodity hardware, with less vendor lock-in (to a fair degree). While Hadoop had its challenges that I won't get into here, it did usher in a new era of Big Data thinking and a Cambrian-like explosion of open source data technology and democratization. Data specs such as ORC, Avro and Parquet, and distributed file systems such as HDFS, gave transparency to your data and modularity to managing growth and costs. You no longer depended exclusively on proprietary data storage engines, query engines and storage formats. So with Hadoop, at the time, we could claim to have gained some degrees of freedom and improved control over our data and software.

Now on-premises Hadoop is dying off (it is dead for the most part), and cloud storage engines and data lakes are taking over. Some of these cloud-native storage solutions and data lake storage engines have largely adopted the open data standards of the Hadoop era (Parquet, Avro, ORC, Snappy, Arrow, etc.). These cloud-native data lake house products can keep you close to your data. Solutions such as Athena, Presto, and managed Databricks let you manage your data in open data formats while storing it on highly elastic and scalable cloud object storage.

However, other cloud data warehousing vendors have emerged and are bringing back the lock-in, meaning your data resides outside your cloud account, in proprietary storage and behind proprietary query engines. Products such as Redshift, Snowflake, BigQuery and Firebolt each have pros and cons in the type and level of lock-in they impose.

It's All About the Data Lake

Many of these engines do offer decent integration with open standards. For example, Redshift, Snowflake and BigQuery all allow fairly easy ingestion from and export to open data standards such as Parquet and ORC. Lock-in is not a bad thing if the solution rocks and is cost effective in the long term. Sometimes specialized proprietary compression and unique architectures do things that are not possible with the open standards of the present day. You be the judge. Or just let your successor in four years deal with it :)

The one bit of advice I would give when building a cloud data platform is to always base your architecture on a data lake house foundation using open data storage standards, elastic cloud storage and a distributed SQL query engine. Your choice of Redshift, Snowflake, BigQuery or other downstream storage engines and analytics tools is critical but secondary.
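To make this concrete, here is a minimal sketch of how you might write down the three questions this article keeps coming back to when comparing platform options: is the storage format open, does the data live in your own cloud account, and can more than one query engine read it in place? The class, function and option names are hypothetical and exist only for illustration. The three example options are generic categories described the way this article describes them, not an assessment of any specific product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PlatformOption:
    """One candidate data platform, described by three yes/no questions."""
    name: str
    open_storage_format: bool   # e.g. Parquet, ORC or Avro rather than a vendor format
    data_in_own_account: bool   # data sits in storage that your cloud account owns
    engine_swappable: bool      # more than one query engine can read the data in place


def lock_in_concerns(option: PlatformOption) -> list[str]:
    """Return the lock-in concerns raised by a platform option (empty if none)."""
    concerns = []
    if not option.open_storage_format:
        concerns.append("proprietary storage format")
    if not option.data_in_own_account:
        concerns.append("data held outside your cloud account")
    if not option.engine_swappable:
        concerns.append("single query engine")
    return concerns


# Hypothetical categories, not real product evaluations.
OPTIONS = [
    PlatformOption("SaaS warehouse in vendor account", False, False, False),
    PlatformOption("Warehouse with proprietary storage in own account", False, True, False),
    PlatformOption("Lake house on own object storage", True, True, True),
]

if __name__ == "__main__":
    for option in OPTIONS:
        concerns = lock_in_concerns(option)
        summary = ", ".join(concerns) or "no lock-in concerns flagged"
        print(f"{option.name}: {summary}")
```

A checklist like this does not make the decision for you, and as noted above some lock-in can be worth it. It only ensures the trade-off is made consciously and upfront rather than discovered later.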